The Agentic AI Digest (4 Dec) | Production-Grade Error Handling, Vertex Vector Search 2.0 & Agent UI Stacks

This week: We look at building a robust API wrapper for Gemini with exponential backoff and retry logic, and highlight the new Vertex AI Vector Search 2.0 architecture and the emerging “Frontend for A

Hi everyone,

Welcome to your weekly briefing from the Agentic AI Roundtable. Our goal is to cut through the noise and deliver the most relevant signals, patterns, and community wins to help you build more effectively.

Let’s dive in.

📒 From the Workbench: Patterns to Pocket

Stop Writing Fragile API Calls: A Guide to Production-Grade Gen AI Error Handling and Retries

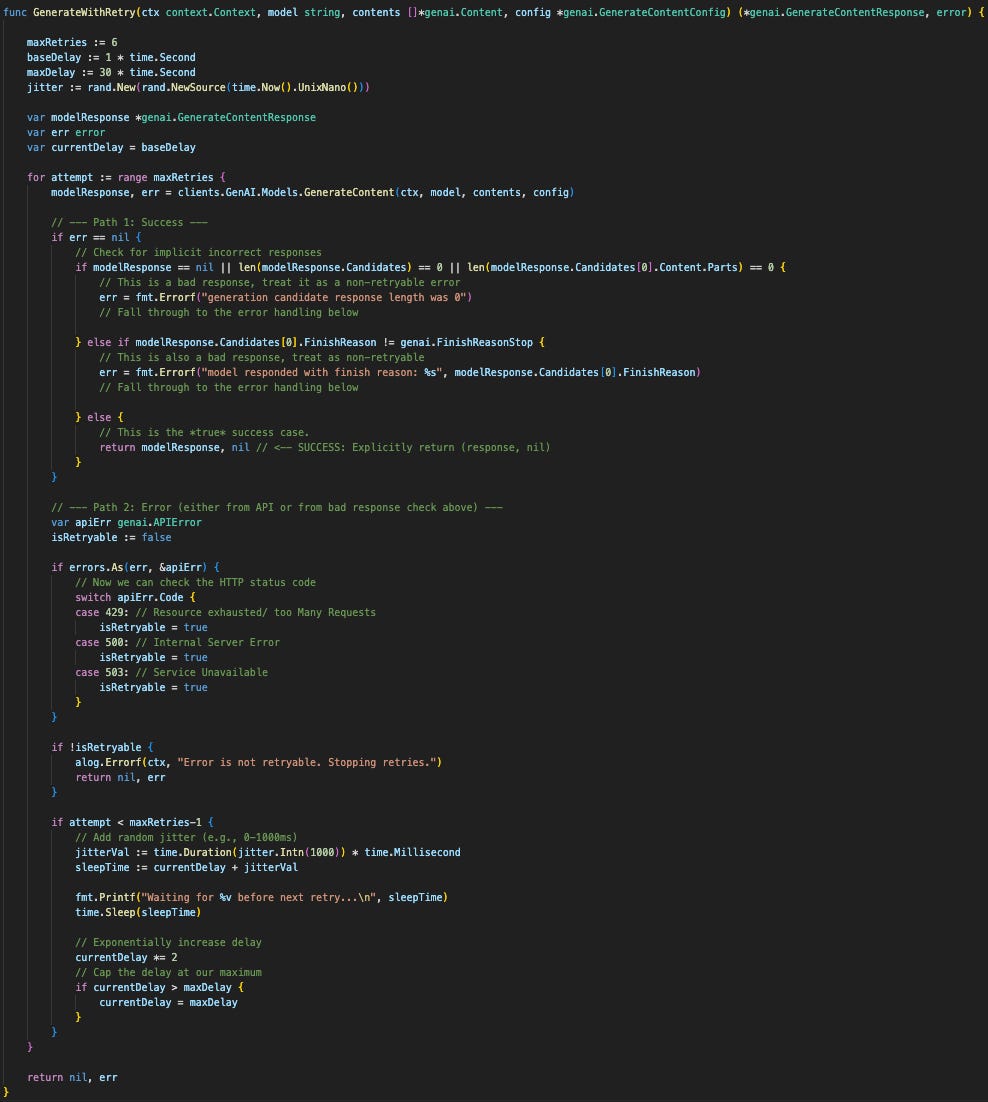

Previously, we covered concurrent processing with Gemini and noted that making concurrent requests increases the chance of hitting rate limit errors. To handle this, we’ve found it works well to wrap the API call in a function that combines error handling and retry logic. This week, we’ll cover this wrapper.

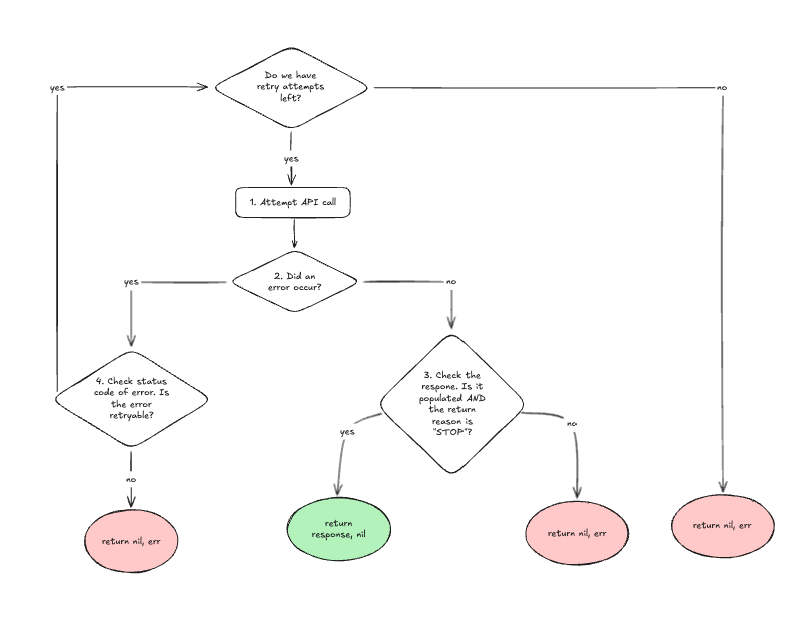

The Wrapper’s Logic Flow

At a high level, the function operates within a loop that runs for a maximum number of retry attempts. Each attempt inside the loop follows this logic:

Attempt the Call: We try the GenerateContent request.

Check for Errors: We then check if the call returned an immediate error.

If NO error occurred (Path to Step 3): We must inspect the response to guard against “implicit” failures.

True Success: If the response is populated AND the finish reason is “STOP”, we return the response and a nil error.

Implicit Failure: If the finish reason is anything other than “STOP”, it’s not a true success. We convert this into an error and return nil for the response.

If an error DID occur (Path to Step 4): We classify the error.

Non-retryable Error: If the error is fundamental (like a 400 Bad Request), we stop immediately and return the error. There’s no point retrying a request that’s invalid.

Retryable Error: If the error is temporary (like a 503 Service Unavailable), we calculate a wait time, sleep, and let the loop continue for another attempt.
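To make the flow concrete, here is a minimal sketch of the loop in Go. It is not the exact wrapper: Response, APIError, callFunc, and callWithRetry are hypothetical stand-ins so the example runs without the SDK or network access, and the wait is plain doubling to keep it short. Treating errors that are not API errors as non-retryable is also an assumption.

```go
package main

import (
	"errors"
	"fmt"
	"time"
)

// Response and APIError are hypothetical stand-ins for the SDK's types.
type Response struct {
	FinishReason string
	Text         string
}

type APIError struct{ Code int }

func (e *APIError) Error() string { return fmt.Sprintf("api error %d", e.Code) }

// callFunc abstracts the GenerateContent request so the sketch runs offline.
type callFunc func() (*Response, error)

// isRetryable reports whether err carries one of the transient status codes.
// Assumption: anything that is not an APIError is treated as non-retryable.
func isRetryable(err error) bool {
	var apiErr *APIError
	if errors.As(err, &apiErr) {
		return apiErr.Code == 429 || apiErr.Code == 500 || apiErr.Code == 503
	}
	return false
}

// callWithRetry runs call up to maxRetries times, following the steps above.
func callWithRetry(call callFunc, maxRetries int, baseDelay time.Duration) (*Response, error) {
	delay := baseDelay
	var lastErr error
	for attempt := 1; attempt <= maxRetries; attempt++ {
		resp, err := call()
		if err == nil {
			// No explicit error: guard against implicit failures.
			if resp != nil && resp.FinishReason == "STOP" {
				return resp, nil // true success
			}
			return nil, errors.New("implicit failure: empty response or finish reason other than STOP")
		}
		if !isRetryable(err) {
			return nil, err // e.g. 400 Bad Request: stop immediately
		}
		lastErr = err
		if attempt < maxRetries {
			time.Sleep(delay) // wait, then let the loop try again
			delay *= 2
		}
	}
	return nil, fmt.Errorf("giving up after %d attempts: %w", maxRetries, lastErr)
}

func main() {
	calls := 0
	// flaky fails twice with a 503, then succeeds.
	flaky := func() (*Response, error) {
		calls++
		if calls < 3 {
			return nil, &APIError{Code: 503}
		}
		return &Response{FinishReason: "STOP", Text: "hello"}, nil
	}
	resp, err := callWithRetry(flaky, 5, time.Millisecond)
	if err != nil {
		fmt.Println("failed:", err)
		return
	}
	fmt.Printf("got %q after %d calls\n", resp.Text, calls)
}
```

Passing the request in as a function is a choice made for this sketch: it keeps the retry logic testable without a live API.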

Handling Two Types of Failure

This wrapper’s real power comes from its ability to distinguish between two kinds of errors:

1. Explicit Errors (The Obvious Failures)

These are the cases where the call returns err != nil: the network connection dropped, the API client timed out, or the server returned a 4xx or 5xx status code.

But not all errors are created equal. We must only retry transient errors.

Non-Retryable: A 400 Bad Request means our prompt is malformed. Retrying the same request will fail every time.

Retryable: A 429 Too Many Requests or 500 Internal Server Error is temporary. The server is telling us, “I can’t handle this right now.”

We use errors.As to inspect the genai.APIError type and check its code. We only set isRetryable = true for codes like 429, 500, and 503.
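As a rough illustration of that check, the sketch below uses a locally defined APIError as a stand-in for the SDK's error type (its fields and pointer receiver are assumptions for this example, so check them against the real type before copying). The point is that errors.As still finds the API error when it has been wrapped further up the call stack.

```go
package main

import (
	"errors"
	"fmt"
)

// APIError is a hypothetical stand-in for the SDK's error type.
type APIError struct {
	Code    int
	Message string
}

func (e *APIError) Error() string { return fmt.Sprintf("%d: %s", e.Code, e.Message) }

// isRetryable returns true only for the transient status codes named above.
func isRetryable(err error) bool {
	var apiErr *APIError
	if !errors.As(err, &apiErr) {
		// Assumption: errors that are not API errors are not retried here.
		return false
	}
	switch apiErr.Code {
	case 429, 500, 503:
		return true
	default:
		return false
	}
}

func main() {
	// errors.As unwraps the chain, so a wrapped API error is still classified.
	wrapped := fmt.Errorf("generate content: %w", &APIError{Code: 429, Message: "rate limited"})
	fmt.Println("wrapped 429 retryable:", isRetryable(wrapped))
	fmt.Println("400 retryable:", isRetryable(&APIError{Code: 400, Message: "bad request"}))
	fmt.Println("plain error retryable:", isRetryable(errors.New("boom")))
}
```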

2. Implicit Errors (The “Silent” Failures)

This is the most critical and often-missed part of API handling. An “implicit error” is when the API call succeeds (err == nil) but the response is useless.

Our wrapper checks for two of these:

Empty Response: The API returns nil or an empty Candidates list. This is a failure.

Wrong Finish Reason: The GenAI model ran but didn’t complete. For example, it might return FinishReason: SAFETY (it was safety-blocked) or FinishReason: MAX_TOKENS (it ran out of room).

In these cases, our code converts the “successful” call into a non-retryable error, because retrying the same prompt would most likely end the same way (a safety-blocked prompt will just get safety-blocked again).
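A minimal sketch of the two implicit checks might look like this. Response, Candidate, ErrEmptyResponse, and checkResponse are hypothetical names for illustration; the real SDK response type has more fields, and only the first candidate is inspected here as a simplifying assumption.

```go
package main

import (
	"errors"
	"fmt"
)

// Candidate and Response are simplified stand-ins for the SDK's response types.
type Candidate struct{ FinishReason string }

type Response struct{ Candidates []Candidate }

// ErrEmptyResponse marks a call that "succeeded" but returned nothing usable.
var ErrEmptyResponse = errors.New("empty response")

// checkResponse turns an implicit failure into an explicit error.
func checkResponse(resp *Response) error {
	if resp == nil || len(resp.Candidates) == 0 {
		return ErrEmptyResponse
	}
	// Assumption: only the first candidate matters for this sketch.
	if reason := resp.Candidates[0].FinishReason; reason != "STOP" {
		return fmt.Errorf("unexpected finish reason %q", reason)
	}
	return nil
}

func main() {
	fmt.Println(checkResponse(nil))
	fmt.Println(checkResponse(&Response{Candidates: []Candidate{{FinishReason: "SAFETY"}}}))
	fmt.Println(checkResponse(&Response{Candidates: []Candidate{{FinishReason: "STOP"}}}))
}
```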

⏳ Why We Wait: Exponential Backoff with Jitter

When we do decide to retry, we can’t just slam the server in a tight loop. We need to back off politely. Exponential Backoff is a strategy where we increase our wait time exponentially after each failure.

Wait 1 second, then retry.

Fail? Wait 2 seconds, then retry.

Fail? Wait 4 seconds, then retry.

Fail? Wait 8 seconds, then retry.

This gives the overloaded server time to recover. Our code does this by starting with a baseDelay and doubling it on each attempt (currentDelay *= 2), up to a maxDelay.
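The doubling-with-a-cap schedule can be sketched in a few lines. The function name backoffSchedule and the 10-second cap are assumptions chosen for this example; baseDelay, maxDelay, and currentDelay follow the names used above.

```go
package main

import (
	"fmt"
	"time"
)

// backoffSchedule returns the wait before each retry: it starts at baseDelay
// and doubles after every attempt, never exceeding maxDelay after the first wait.
func backoffSchedule(baseDelay, maxDelay time.Duration, attempts int) []time.Duration {
	delays := make([]time.Duration, 0, attempts)
	currentDelay := baseDelay
	for i := 0; i < attempts; i++ {
		delays = append(delays, currentDelay)
		currentDelay *= 2
		if currentDelay > maxDelay {
			currentDelay = maxDelay
		}
	}
	return delays
}

func main() {
	// Six waits with a 1-second base and an (assumed) 10-second cap.
	for i, d := range backoffSchedule(time.Second, 10*time.Second, 6) {
		fmt.Printf("retry %d: wait %v\n", i+1, d)
	}
}
```

With these values the waits are 1, 2, 4, and 8 seconds, after which every further wait stays at the 10-second cap.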

Jitter is the secret ingredient. If all of our concurrent processes get a rate-limit error at the same time, they’ll all retry at 1s, 2s, and 4s, hammering the API at almost-synchronized intervals. Jitter prevents this by adding a small, random amount of time to each wait (sleepTime := currentDelay + jitterVal). This staggers the retries, spreading the load and giving the server a much better chance of recovery.
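Here is one way the jitter step could look. How large the jitter should be is not specified above, so the maxJitter parameter and the 500 ms value are assumptions for this sketch; withJitter is a hypothetical helper name.

```go
package main

import (
	"fmt"
	"math/rand"
	"time"
)

// withJitter adds a random duration in [0, maxJitter) to currentDelay.
func withJitter(currentDelay, maxJitter time.Duration) time.Duration {
	if maxJitter <= 0 {
		return currentDelay // rand.Int63n panics for n <= 0, so skip jitter
	}
	jitterVal := time.Duration(rand.Int63n(int64(maxJitter)))
	return currentDelay + jitterVal
}

func main() {
	// Three workers that failed at the same moment now wake at different times.
	currentDelay := 2 * time.Second
	for worker := 1; worker <= 3; worker++ {
		sleepTime := withJitter(currentDelay, 500*time.Millisecond)
		fmt.Printf("worker %d sleeps %v\n", worker, sleepTime)
	}
}
```

Each run prints different values between 2s and 2.5s, which is exactly the staggering we want.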

By wrapping our client this way, we’ve moved all this complex logic out of our main application and created a simple, robust, and resilient function.

📡 On the Radar: What’s Moving the Needle

A curated look at the articles, papers, resources and updates that are worth your time this week.

Vertex AI Vector Search 2.0 arrives. A significant architectural shift for RAG pipelines, the new “Vector Search 2” moves from managing raw indexes to “Collections” that store both vectors and source data together. It’s fully serverless and supports hybrid search (keywords + vectors) out of the box.

The “Frontend for Agents” stack is solidifying. We are seeing rapid maturation in how agents render interfaces. The AG-UI protocol is an emerging standard for streaming agent-user interactions (state, tool calls, and interruptions), while the A2UI project is defining the declarative schema for “Generative UI”, allowing agents to render native components (forms, cards) rather than just text.

Google Workspace Studio is now GA. Google has rolled out its no-code workflow builder to General Availability, integrating it directly into its productivity stack. It allows non-technical users to build agents that orchestrate work across Gmail, Drive, and Chat using natural language descriptions, effectively bringing the “agentic” capabilities of Gemini to the everyday workforce.

🤝 Want to Get Involved in the Community?

This roundtable is driven by its members. To join the conversation, share your work, or ask a question, you have two great options:

Join our private Google Chat space for real-time discussions and to participate in the weekly Open Thread. [Link to Chat Space]

Send a message to our community Google Group at roundtable-community@agentic-ai.build.

We look forward to hearing from you.

The Agentic AI Roundtable Core Team