The Agentic AI Digest (30 Oct) | Google Search Grounding, Code Sandbox & Agent Memory Bank

This week: we look at building a fact-checker with Google Search grounding and highlight the new Code Sandbox and Memory Bank capabilities in the Vertex AI Agent Engine.

Hi everyone,

Welcome to your weekly briefing from the Agentic AI Roundtable. Our goal is to cut through the noise and deliver the most relevant signals, patterns, and community wins to help you build more effectively.

Let’s dive in.

📒 From the Workbench: Patterns to Pocket

Building a Fact-Checker with Google Search Grounding

Last week, we explored Gemini’s built-in URL Context tool, which lets the model “read” the contents of a specific link. This week, we’ll add a powerful partner to the mix: Grounding with Google Search.

This tool lets Gemini run Google searches to ground its responses. On its own, it’s great for:

Accessing real-time information (e.g., “What’s the score of the game right now?”).

Checking factual accuracy against public web data.

Providing inline citations for its claims, so you know where it got its information.

To showcase its usefulness, we’ll combine it with last week’s URL Context tool to build a “fact-checker” RPC method. It receives the URL of a specific article or post and returns a report that validates the page’s claims, complete with inline citations to relevant web sources. The complete code lives in the Agentic AI Digest example repository:

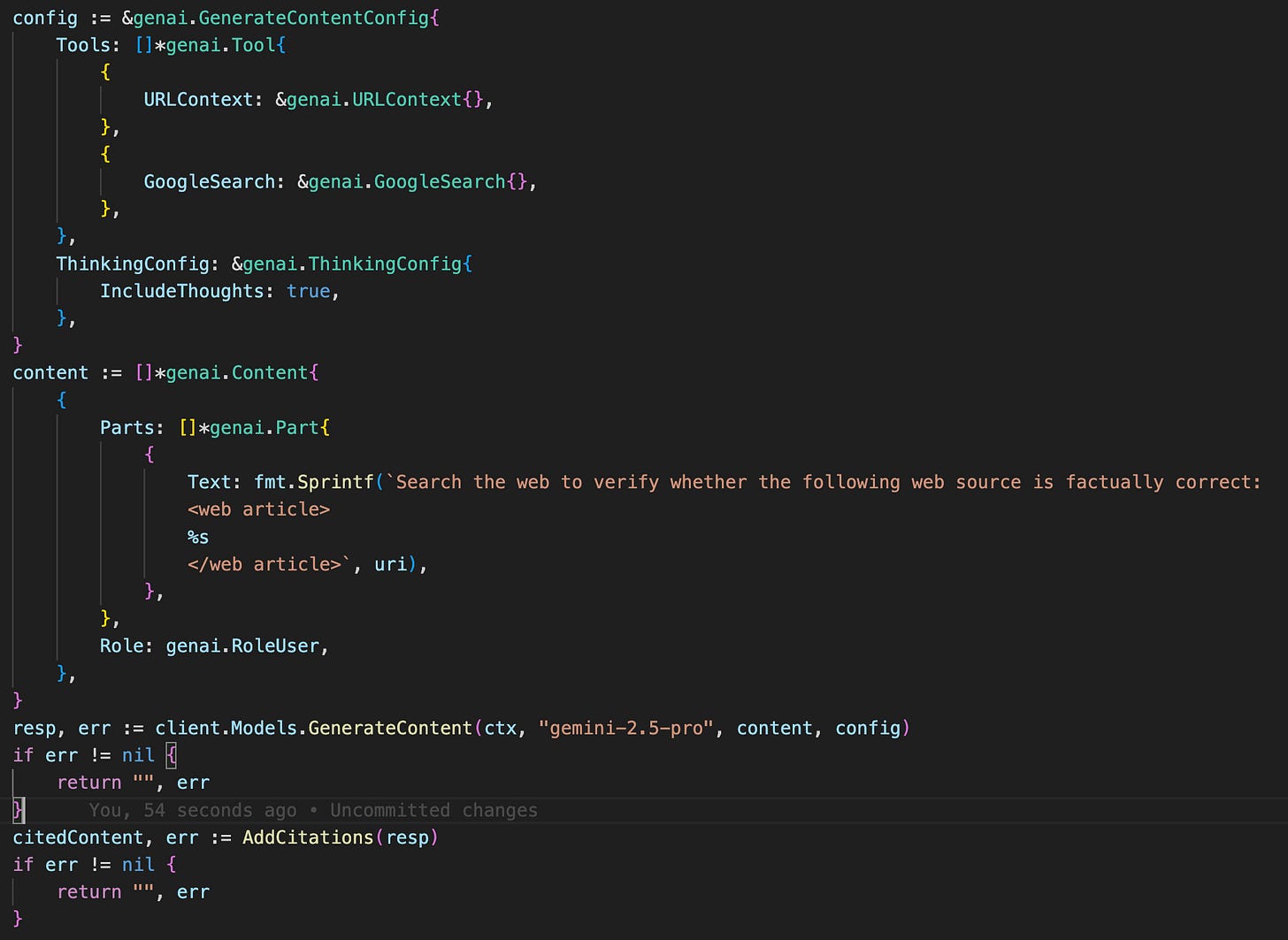

In the Code: Configuring the Tools

The method is simple:

We tell Gemini to use both tools by enabling them in the generation config of our request.

Our prompt then explicitly asks the model to perform the fact-checking task.

After calling GenerateContent, we process the response to insert inline citations.
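The configuration and prompt steps can be sketched in a few lines. This is a minimal Python sketch, not the repo’s code (the repo’s language and SDK may differ): it assumes the Gemini REST `generateContent` request shape, in which each built-in tool is switched on by an empty object in a top-level `tools` list (the SDKs expose the same list through their content-generation config). The prompt wording and the `build_fact_check_request` name are hypothetical.

```python
import json

# Hypothetical prompt template; the repo's actual wording may differ.
FACT_CHECK_PROMPT = (
    "Read the article at {url}. Identify its main factual claims, "
    "verify each one using Google Search, and write a short report that "
    "states whether the article is accurate and explains why."
)


def build_fact_check_request(url: str) -> dict:
    """Build a generateContent request body that enables both built-in tools.

    Assumes the Gemini REST request shape, where each built-in tool is
    enabled by an empty object in the top-level "tools" list.
    """
    return {
        "contents": [
            {"role": "user", "parts": [{"text": FACT_CHECK_PROMPT.format(url=url)}]}
        ],
        "tools": [
            {"url_context": {}},    # lets the model read the page at `url`
            {"google_search": {}},  # lets the model search the web to verify claims
        ],
    }


if __name__ == "__main__":
    body = build_fact_check_request("https://example.com/some-article")
    print(json.dumps(body, indent=2))
```

Note that the request itself carries no orchestration logic: deciding when to fetch the page and which searches to run is left entirely to the model.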

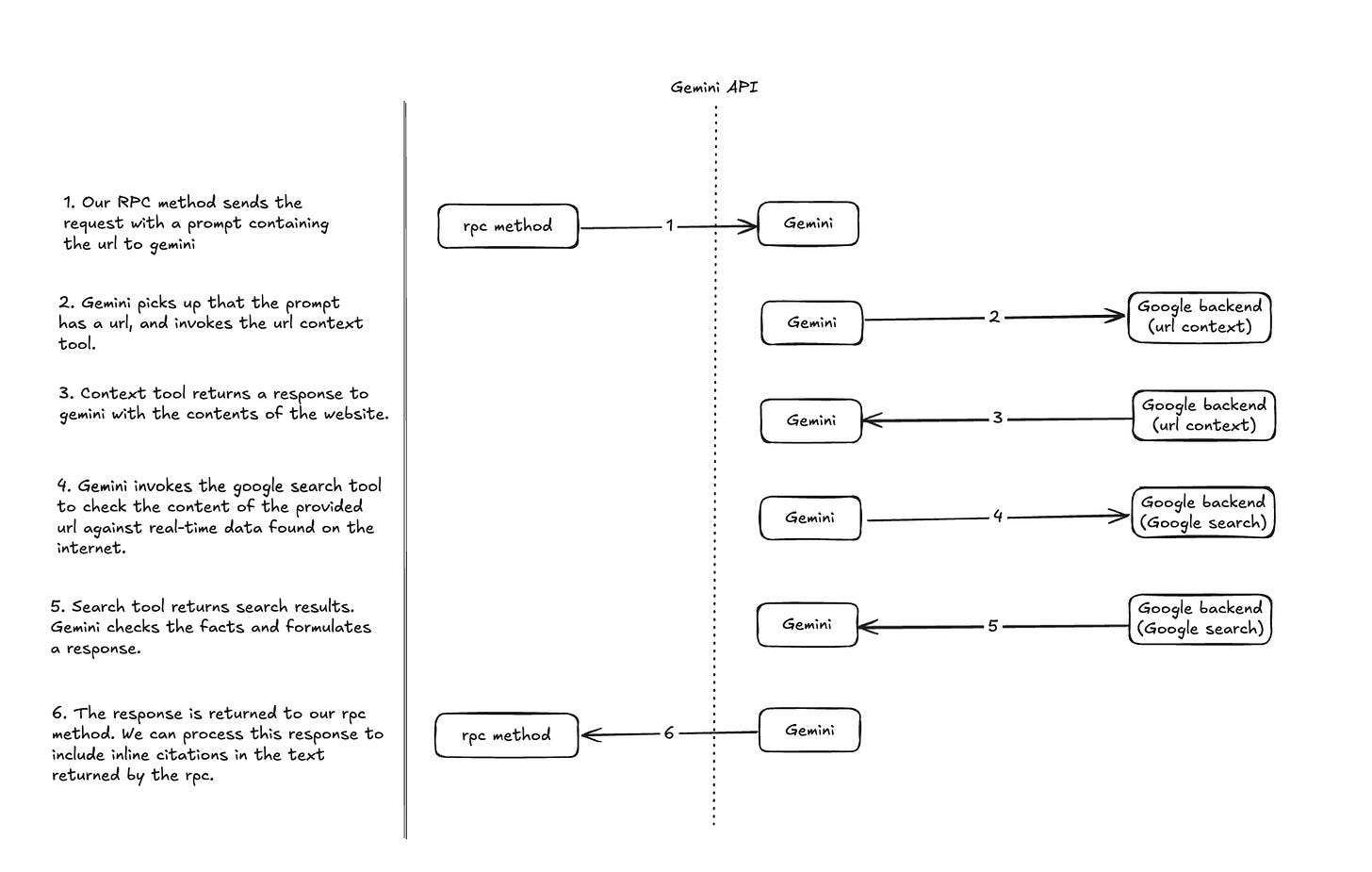

Behind the Scenes: The Multi-Step Flow

Using Gemini’s built-in tools significantly simplifies our RPC method, since we only need to list them in the config. Once we send our single request to the Gemini API, a sophisticated, multi-step process happens entirely on its backend, which we’ve diagrammed out:

Handling the Response: Building Citations

That final response is special. Because we enabled Google Search, the candidate in our response now contains a GroundingMetadata object, which is what lets us cite sources. It contains three key pieces:

webSearchQueries: An array of the exact search queries Gemini used. This is invaluable for debugging and understanding the model’s reasoning.

groundingChunks: An array of web sources (with URI and title) that the model found and used.

groundingSupports: This is the most important part. It’s an array that links segments of the model’s text (defined by a startIndex and endIndex) directly to one or more of the groundingChunks.
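To make those three fields concrete, here is a minimal Python sketch that converts a candidate’s `groundingMetadata` JSON into small typed objects. It assumes the REST/JSON field names (`webSearchQueries`, `web.uri`, `web.title`, `segment.startIndex`, `segment.endIndex`, and `groundingChunkIndices`, the list of positions in `groundingChunks` that a support points to). The class and function names are hypothetical, and each SDK exposes the same data under its own naming convention.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class WebChunk:
    """One web source the model used (hypothetical type)."""
    uri: str
    title: str


@dataclass
class Support:
    """Links a range of the answer text to one or more chunks by position."""
    start_index: int
    end_index: int
    chunk_indices: list[int]


@dataclass
class Grounding:
    web_search_queries: list[str] = field(default_factory=list)
    chunks: list[WebChunk] = field(default_factory=list)
    supports: list[Support] = field(default_factory=list)


def parse_grounding(meta: dict) -> Grounding:
    """Convert a candidate's groundingMetadata JSON into typed objects."""
    # Keep every chunk, even one without a "web" entry, so list positions
    # still line up with the numbers in groundingChunkIndices.
    chunks = [
        WebChunk(
            uri=c.get("web", {}).get("uri", ""),
            title=c.get("web", {}).get("title", ""),
        )
        for c in meta.get("groundingChunks", [])
    ]
    supports = [
        Support(
            # Proto3 JSON encoding may omit zero-valued fields, so a missing
            # startIndex is treated as 0.
            start_index=s["segment"].get("startIndex", 0),
            end_index=s["segment"]["endIndex"],
            chunk_indices=list(s.get("groundingChunkIndices", [])),
        )
        for s in meta.get("groundingSupports", [])
    ]
    return Grounding(list(meta.get("webSearchQueries", [])), chunks, supports)


if __name__ == "__main__":
    # Illustrative placeholder metadata, not real API output.
    sample = {
        "webSearchQueries": ["example query"],
        "groundingChunks": [{"web": {"uri": "https://example.com/a", "title": "example.com"}}],
        "groundingSupports": [{"segment": {"endIndex": 12}, "groundingChunkIndices": [0]}],
    }
    g = parse_grounding(sample)
    print("Queries:", g.web_search_queries)  # handy when debugging the model's searches
    print(g.supports[0])
```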

The model’s text doesn’t come with citations pre-formatted, so we have to build them ourselves from this metadata. Our implementation is in the repo.
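A minimal Python sketch of inline-citation insertion might look like the following. It is not the repo’s implementation: it works directly on the raw JSON dict, assumes the answer is a single text part, and assumes segment offsets are UTF-8 byte offsets (our reading of the API reference; for plain ASCII text, byte and character offsets coincide). The `add_inline_citations` name and the `[n]` marker format are our own choices, with chunk `i` numbered `[i + 1]`.

```python
def add_inline_citations(text: str, meta: dict) -> str:
    """Insert [n] markers after supported segments and append a source list.

    Assumes `text` is a single response part and that segment offsets are
    UTF-8 byte offsets into it.
    """
    chunks = meta.get("groundingChunks", [])
    supports = meta.get("groundingSupports", [])
    data = text.encode("utf-8")

    # Work from the last segment backwards so each insertion only shifts
    # bytes that come after offsets we have already handled.
    for support in sorted(supports, key=lambda s: s["segment"]["endIndex"], reverse=True):
        indices = support.get("groundingChunkIndices", [])
        if not indices:
            continue
        end = support["segment"]["endIndex"]
        marker = "".join(f"[{i + 1}]" for i in indices)  # e.g. "[1][2]"
        data = data[:end] + marker.encode("utf-8") + data[end:]

    cited = data.decode("utf-8")
    if not chunks:
        return cited

    # Number sources to match the markers: chunk i becomes [i + 1].
    lines = []
    for i, chunk in enumerate(chunks):
        web = chunk.get("web", {})
        lines.append(f"[{i + 1}] {web.get('title', '')} <{web.get('uri', '')}>")
    return cited + "\n\nSources:\n" + "\n".join(lines)


if __name__ == "__main__":
    # Illustrative placeholder metadata, not real API output.
    meta = {
        "groundingChunks": [
            {"web": {"uri": "https://example.com/a", "title": "example.com"}},
            {"web": {"uri": "https://example.org/b", "title": "example.org"}},
        ],
        "groundingSupports": [
            {"segment": {"startIndex": 0, "endIndex": 10}, "groundingChunkIndices": [0]},
            {"segment": {"startIndex": 11, "endIndex": 21}, "groundingChunkIndices": [0, 1]},
        ],
    }
    print(add_inline_citations("Claim one. Claim two.", meta))
```

Processing supports from the highest `endIndex` down is the key design choice. Inserting front-to-back would push every later offset out of place by the length of each marker already added.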

Testing It Out

I tried the RPC on a Reddit post claiming that Gemini 3.0’s release date had been leaked. It responded with:

The web article from Reddit you cited is **factually incorrect**.

The Reddit post claimed that Google Gemini 3 would be announced on Wednesday, October 22, 2025, with a release date of November 12, 2025. However, there was no official announcement from Google on that date.

While there were leaks and rumors circulating in mid-October 2025 that pointed to a potential October 22 announcement, these were met with skepticism and ultimately proved to be inaccurate [1][2] [3] [4]. In fact, some sources suggested that Google might adhere to a December launch schedule, which would be in line with previous Gemini releases [2].

What has been confirmed is a statement from Google CEO Sundar Pichai at the Dreamforce conference in October 2025, where he mentioned that Gemini 3.0 would be released later in 2025, without specifying a date [5], [6]. As of October 23, 2025, some users have reported seeing upgrade notices to “3.0 Pro,” suggesting a quiet rollout is underway, but there has been no formal announcement event as the Reddit post claimed [7], [8].

📡 On the Radar: What’s Moving the Needle

A curated look at the articles, papers, resources and updates that are worth your time this week.

Google announced Code Execution, a new Code Sandbox tool for the Vertex AI Agent Engine. This built-in, sandboxed environment allows agents to safely execute Python code, unlocking new capabilities for on-the-fly data analysis, mathematical computation, and complex data manipulation. It’s accessible as a built-in tool or via the AgentEngineSandboxCodeExecutor class in the Python ADK. Learn more on Google.dev.

The Vertex AI Agent Engine also received a significant upgrade with the new Memory Bank service. This managed feature allows agents to generate, store, and recall long-term memories from user conversations, enabling developers to build more personalized, stateful, and context-aware interactions that persist over time.

🤝 Want to Get Involved in the Community?

This roundtable is driven by its members. To join the conversation, share your work, or ask a question, you have two great options:

Join our private Google Chat space for real-time discussions and to participate in the weekly Open Thread. [Link to Chat Space]

Send a message to our community Google Group at roundtable-community@agentic-ai.build.

We look forward to hearing from you.

The Agentic AI Roundtable Core Team