The Agentic AI Digest (19/09) | Building A2A Agents with gRPC & Tuning Model 'Thinking'

This week: Get hands-on with gRPC for agent discovery, tune models for deeper reasoning, and see how the new Agent Payments Protocol (AP2) could let agents transact on your behalf.

Hi everyone,

Welcome to your weekly briefing from the Agentic AI Roundtable. Our goal is to cut through the noise and deliver the most relevant signals, patterns, and community wins to help you build more effectively.

Let's dive in.

🛠️ Community Commits: Building in the Open

This week’s “Watch Me Build” takes the Agent2Agent (A2A) protocol off the whiteboard and into the IDE. We begin our practical journey of creating an A2A server from scratch, using Go and the gRPC A2A transport as the backbone for our agent implementation.

Join us as we configure a gRPC server, register the A2A service, and implement and test the GetAgentCard RPC method, the key to making your agent discoverable by user and agent clients.

Building Production Ready A2A Agents: gRPC Server Setup in Go

Useful links

📒 From the Workbench: Patterns to Pocket

The power of “thinking” for better AI responses

For complex tasks, a great prompt is only part of the solution; you also need to tune the model. For the next few weeks, we'll be covering model configuration selection. This week's tip is about the thinking budget, a powerful parameter available on some Gemini models. It allows the model to perform a multi-step, internal reasoning process before generating a final response.

What it is: "Thinking" is a form of Chain-of-Thought reasoning that happens within the model's architecture. It functions like an internal scratchpad, where the model breaks down a problem, plans its approach, and works through the steps before producing a final output. For debugging and transparency, the Gemini API can expose this process: when the include_thoughts parameter is set to true, the thoughts are returned as separate output ahead of the final response.

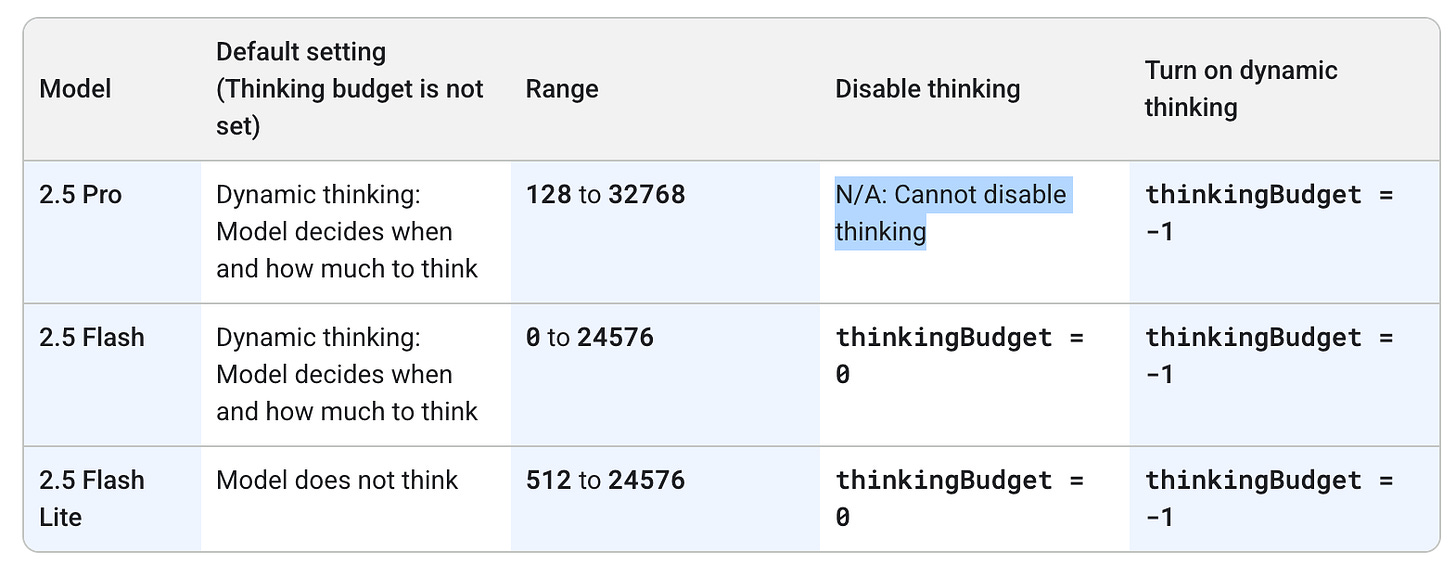
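As a rough illustration of how the thoughts can be told apart from the answer on the client side, the sketch below splits a response into the two. It assumes the REST-style JSON shape in which each part carries a text field and thought parts are flagged with a boolean thought field; the sample payload is hand-written for this example, not captured from a real call, and splitThoughts is a hypothetical helper name.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// part mirrors the two fields of a response part that matter here.
type part struct {
	Text    string `json:"text"`
	Thought bool   `json:"thought"`
}

// response mirrors only the slice of the payload this sketch reads.
type response struct {
	Candidates []struct {
		Content struct {
			Parts []part `json:"parts"`
		} `json:"content"`
	} `json:"candidates"`
}

// splitThoughts returns the concatenated thought text and the concatenated
// answer text of the first candidate.
func splitThoughts(raw []byte) (thoughts, answer string, err error) {
	var r response
	if err = json.Unmarshal(raw, &r); err != nil {
		return "", "", err
	}
	if len(r.Candidates) == 0 {
		return "", "", fmt.Errorf("response has no candidates")
	}
	var t, a strings.Builder
	for _, p := range r.Candidates[0].Content.Parts {
		if p.Thought {
			t.WriteString(p.Text)
		} else {
			a.WriteString(p.Text)
		}
	}
	return t.String(), a.String(), nil
}

func main() {
	// Hand-written sample payload (an assumption, for illustration only).
	sample := []byte(`{"candidates":[{"content":{"parts":[
		{"text":"Plan: add the two numbers.","thought":true},
		{"text":"The answer is 4."}]}}]}`)

	thoughts, answer, err := splitThoughts(sample)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println("thoughts:", thoughts)
	fmt.Println("answer:  ", answer)
}
```

Keeping the two streams separate makes it easy to log the thoughts for debugging while showing only the answer to end users.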

Note that Gemini models have different thinking limitations and default configurations, as summarized in the table below:

Benefits of enabling thinking:

Improved Accuracy: Reduces hallucinations and improves problem-solving for difficult tasks.

Higher Quality: Leads to more structured, well-reasoned, and coherent responses.

Drawbacks of enabling thinking:

Increased Latency: The additional processing time for thinking can slow down response times.

Higher Cost: The internal thinking process consumes more tokens, increasing your API costs.

When to use it: Use thinking for any task that requires advanced reasoning, such as solving multi-step math problems, generating complex code, or summarizing long, nuanced documents. For simple, factual queries where low latency is critical (e.g., "What is the capital of France?"), it’s best to disable thinking to reduce cost and latency.

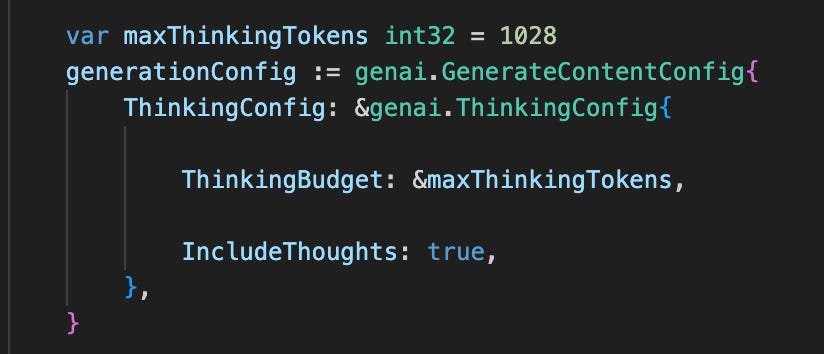
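One way to apply this guidance in code is to pick the budget per request from the kind of task. The sketch below is a hypothetical policy, not an official recommendation: the task categories and the token numbers are assumptions chosen for illustration, and it assumes a budget of 0 turns thinking off, which holds only on models that allow thinking to be disabled.

```go
package main

import "fmt"

// TaskKind is a hypothetical classification of incoming requests.
type TaskKind int

const (
	SimpleLookup TaskKind = iota // e.g. "What is the capital of France?"
	LongSummary                  // long, nuanced documents
	MultiStepMath                // multi-step math problems
	ComplexCode                  // complex code generation
)

// thinkingBudgetFor maps a task kind to a thinking budget in tokens.
// The numbers are illustrative assumptions; tune them against your own
// latency and cost measurements.
func thinkingBudgetFor(kind TaskKind) int32 {
	switch kind {
	case MultiStepMath, ComplexCode:
		return 8192 // deep reasoning: accept extra latency and cost
	case LongSummary:
		return 2048 // moderate reasoning
	default:
		return 0 // simple, latency-critical queries: no thinking
	}
}

func main() {
	fmt.Println("lookup:", thinkingBudgetFor(SimpleLookup))
	fmt.Println("summary:", thinkingBudgetFor(LongSummary))
	fmt.Println("math:", thinkingBudgetFor(MultiStepMath))
	fmt.Println("code:", thinkingBudgetFor(ComplexCode))
}
```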

How to enable it: Below is an example of specifying the thinking configuration with the Gen AI Golang SDK.
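As a dependency-free illustration of the same settings, the sketch below builds the JSON request body with a thinkingConfig block under generationConfig, assuming the REST field names thinkingBudget and includeThoughts. In the Go SDK the corresponding settings are, to the best of our knowledge, the ThinkingBudget and IncludeThoughts fields of genai.ThinkingConfig, set on GenerateContentConfig; check the SDK reference linked below before relying on that. The struct and function names here are hypothetical, and the budget of 1024 is an arbitrary example value.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// thinkingConfig carries the two thinking-related settings.
type thinkingConfig struct {
	ThinkingBudget  int32 `json:"thinkingBudget"`
	IncludeThoughts bool  `json:"includeThoughts"`
}

type generationConfig struct {
	ThinkingConfig thinkingConfig `json:"thinkingConfig"`
}

type textPart struct {
	Text string `json:"text"`
}

type content struct {
	Parts []textPart `json:"parts"`
}

// generateContentRequest is a trimmed view of the request body.
type generateContentRequest struct {
	Contents         []content        `json:"contents"`
	GenerationConfig generationConfig `json:"generationConfig"`
}

// buildRequest serializes a single-prompt request with the given
// thinking budget (in tokens) and include-thoughts flag.
func buildRequest(prompt string, budget int32, includeThoughts bool) ([]byte, error) {
	req := generateContentRequest{
		Contents: []content{{Parts: []textPart{{Text: prompt}}}},
		GenerationConfig: generationConfig{
			ThinkingConfig: thinkingConfig{
				ThinkingBudget:  budget,
				IncludeThoughts: includeThoughts,
			},
		},
	}
	return json.Marshal(req)
}

func main() {
	body, err := buildRequest("Explain why the sky is blue, step by step.", 1024, true)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	// The body would be POSTed to the model's generateContent endpoint.
	fmt.Println(string(body))
}
```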

Further Resources:

Official Google Documentation on Thinking: https://ai.google.dev/gemini-api/docs/thinking

Gen AI Go SDK: https://pkg.go.dev/google.golang.org/genai#section-readme

📡 On the Radar: What's Moving the Needle

A curated look at the articles, papers, resources and updates that are worth your time this week.

💳 Agents Get Their Own Wallets: The dream of autonomous agents that can transact on our behalf just took a major step forward. Google Cloud and Coinbase have announced the Agent Payments Protocol (AP2), a framework designed to enable agents to securely conduct transactions. This could unlock use cases from personal shopping assistants to automated travel agents.

See the official Google announcement of AP2, Coinbase's announcement, and a powerful demo of it in action.

📈 Automating Economic Research: For the economists in our community, a new paper from the National Bureau of Economic Research (NBER) explores how AI agents can be used to automate and scale economic research. It’s a fascinating look at how agentic systems can be applied to complex analytical and simulation tasks in a scientific domain.

✨ The Rise of "Vibe Coding" and its Realities: Agents that translate natural language descriptions into functional UIs, a practice known as "Vibe Coding", are gaining serious traction. For those looking to peek under the hood, new open-source implementations like Open Lovable and Vercel's open-source v0 provide a window into their agentic loops and prompting. However, it's not all smooth sailing. This piece from SaaStr breaks down the significant security challenges that these prosumer tools still face.

🔓 New Attack Vectors for Agentic Systems: As we give agents more capabilities, we also create new security risks. Prompt engineering can be used for more than just getting better outputs; it can become a vector for data exfiltration. Zenity Labs has released two must-see demonstrations showing how these attacks play out in the real world, first through a compromised Copilot Studio agent and then via a simple Jira ticket.

🤝 Want to Get Involved in the Community?

This roundtable is driven by its members. To join the conversation, share your work, or ask a question, you have two great options:

Join our private Google Chat space for real-time discussions and to participate in the weekly Open Thread. [Link to Chat Space]

Send a message to our community Google Group at roundtable-community@agentic-ai.build

We look forward to hearing from you.

– The Agentic AI Roundtable Core Team