The Agentic AI Digest (03/10) | Giving Your Agent Its Tools & Choosing Between Gemini Pro & Flash

This week: We connect tools to our Go agent and build the agentic loop, break down the trade-offs between Gemini Pro and Flash, and explore the critical shift from prompt to context engineering.

Hi everyone,

Welcome to your weekly briefing from the Agentic AI Roundtable. Our goal is to cut through the noise and deliver the most relevant signals, patterns, and community wins to help you build more effectively.

Let’s dive in.

🛠️ Community Commits: Building in the Open

The A2A agent build continues! After giving our agent a brain last week, it’s time to give it its tools. This week’s “Watch Me Build” covers connecting tools to your agent and setting up the agentic loop.

Watch Me Build: A2A Agent in Go - Part 4: Tool Registration and Usage

Useful links

📒 From the Workbench: Patterns to Pocket

This week, we continue our series on generation configuration settings by focusing on one of the most critical choices: model selection. The Gemini API provides access to several models. To keep this discussion concise, we’ll assume that text generation is the goal, and consider only the Pro and Flash variants. Choosing the appropriate model for your application is key to balancing performance, latency, and cost. As usual, we’ll start with a theoretical explanation of the differences between Flash and Pro, then move towards practical application.

Model training and architecture differences

1. Gemini 2.5 Pro: Engineered for Maximum Capability

Gemini 2.5 Pro is the high-performance, flagship model. It consistently achieves top-tier results on industry benchmarks (e.g., MMLU, GPQA) that measure advanced reasoning, mathematics, and multimodal understanding. However, it is much slower and more expensive than Flash.

Architecture & Model Size: Pro is a large-scale model with a very high parameter count.

Training Sequence: Pre-training -> supervised fine-tuning (SFT) and reinforcement learning from human feedback (RLHF).

Pre-training: A form of self-supervised learning in which the model is trained to predict the next token (e.g., a word or piece of a word) in a sequence. In doing so, it learns grammar, facts, and reasoning patterns from a massive dataset of text and code (typically publicly available data from books and the internet).

SFT and RLHF: Pre-training produces a model that ‘knows’ a lot but isn’t yet useful as an assistant, because it is a pure completion engine: it predicts the most likely next token rather than following user instructions. SFT and RLHF are therefore used to ‘align’ the model. In SFT, the model is trained on a high-quality dataset of example conversations and instruction-response pairs to teach it how to be a helpful assistant. RLHF is a more advanced technique in which a separate ‘reward model’ is trained on human preferences and then used to further tune the main model towards outputs that humans find more helpful, honest, and harmless. For Gemini Pro, the SFT and RLHF processes are extremely rigorous, producing a highly capable model.

2. Gemini 2.5 Flash: Engineered for Maximum Efficiency

Gemini 2.5 Flash is optimised for high throughput and low latency at a reduced cost.

Architecture & Model Size: Flash is a deliberately more compact model with a significantly smaller parameter count.

Training Sequence: Pre-training -> knowledge distillation -> SFT and RLHF

Pre-training: The pre-training step is similar to Pro’s.

Knowledge Distillation: To efficiently align the model, Flash undergoes a “distillation” training step where it learns from a larger “teacher” model (like Pro). The smaller Flash model is trained to replicate the teacher’s output distributions, effectively inheriting its advanced reasoning capabilities in a much more efficient form.

SFT and RLHF: Since Flash is already largely aligned by distillation, a much shorter SFT and RLHF process is applied.
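To make the distillation step above more concrete, here is a minimal sketch of the classic distillation objective: the student is penalised by the KL divergence between its softened output distribution and the teacher's. This is the generic textbook formulation, not a description of how Gemini Flash is actually trained; the logits, the three-token vocabulary, and the temperature of 2.0 are all made-up values for illustration.

```go
package main

import (
	"fmt"
	"math"
)

// softmax turns logits into a probability distribution.
// A temperature t > 1 flattens the distribution, exposing more of the
// teacher's relative preferences between tokens.
func softmax(logits []float64, t float64) []float64 {
	maxLogit := math.Inf(-1)
	for _, l := range logits {
		maxLogit = math.Max(maxLogit, l)
	}
	probs := make([]float64, len(logits))
	var sum float64
	for i, l := range logits {
		probs[i] = math.Exp((l - maxLogit) / t) // subtract max for numerical stability
		sum += probs[i]
	}
	for i := range probs {
		probs[i] /= sum
	}
	return probs
}

// distillationLoss returns KL(teacher || student) over the softened
// distributions. It is zero when the student matches the teacher exactly.
func distillationLoss(teacherLogits, studentLogits []float64, t float64) float64 {
	p := softmax(teacherLogits, t)
	q := softmax(studentLogits, t)
	var kl float64
	for i := range p {
		if p[i] > 0 {
			kl += p[i] * math.Log(p[i]/q[i])
		}
	}
	return kl
}

func main() {
	// Hypothetical logits over a three-token vocabulary.
	teacher := []float64{4.0, 1.0, 0.5}
	student := []float64{2.0, 1.5, 1.0}

	fmt.Printf("loss with mismatched student: %.4f\n", distillationLoss(teacher, student, 2.0))
	fmt.Printf("loss with matched student:    %.4f\n", distillationLoss(teacher, teacher, 2.0))
}
```

In a real training loop this loss would be minimised by gradient descent over the student's weights, usually across many tokens and often mixed with a standard next-token loss.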

Further optimisation:

Quantization: To further enhance speed, models in this class are often served using quantization. This process reduces the numerical precision of the model’s weights (e.g., from 16-bit floating point to 8-bit integers), which lowers the memory footprint and accelerates computation on modern hardware.
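As a rough illustration of what quantization does to a weight tensor, the sketch below applies simple symmetric per-tensor int8 quantization. It is one common scheme, chosen here for brevity; nothing in this digest confirms which scheme (if any) is used to serve Gemini models. The weights are invented, and float32 stands in for 16-bit floats because Go has no native 16-bit float type.

```go
package main

import (
	"fmt"
	"math"
)

// quantize maps float32 weights to int8 using a single symmetric scale,
// so that the largest-magnitude weight lands on +/-127.
func quantize(weights []float32) ([]int8, float32) {
	var maxAbs float64
	for _, w := range weights {
		maxAbs = math.Max(maxAbs, math.Abs(float64(w)))
	}
	if maxAbs == 0 {
		return make([]int8, len(weights)), 1 // all-zero tensor: any scale works
	}
	scale := float32(maxAbs / 127)
	q := make([]int8, len(weights))
	for i, w := range weights {
		v := math.Round(float64(w) / float64(scale))
		v = math.Max(-127, math.Min(127, v)) // clamp against rounding drift
		q[i] = int8(v)
	}
	return q, scale
}

// dequantize recovers approximate float32 weights from the int8 values.
func dequantize(q []int8, scale float32) []float32 {
	out := make([]float32, len(q))
	for i, v := range q {
		out[i] = float32(v) * scale
	}
	return out
}

func main() {
	weights := []float32{0.42, -1.27, 0.031, 0.9} // hypothetical weights
	q, scale := quantize(weights)
	restored := dequantize(q, scale)

	fmt.Println("int8 values:", q)
	fmt.Println("scale:      ", scale)
	fmt.Println("restored:   ", restored)
}
```

Each stored weight drops from 4 bytes to 1 in this sketch, at the cost of a rounding error of at most half a quantization step per weight.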

Model Selection in Practice

When selecting a model, start by reviewing the model’s limitations and capabilities. See the linked documentation for compatible input file types, file number and size limits, input token and output token limitations, as well as supported tools. If both Flash and Pro support your use case, we recommend starting by experimenting with Flash (as it’s both faster and cheaper). If the quality of output is unsatisfactory, move over to Pro. Remember that the primary trade-off between Gemini Pro and Flash is between state-of-the-art performance and high efficiency (speed and cost). Therefore, the general rules-of-thumb when picking a model are:

Use Gemini 2.5 Pro for:

Tasks requiring complex, multi-step reasoning and intricate instruction following.

Applications where maximizing output quality is the primary objective, ahead of latency or cost.

Tasks where the output is passed into downstream genAI calls (i.e., the quality of generated content affects the quality of subsequent generated content).

Use Gemini 2.5 Flash for:

Reducing costs for high-volume tasks.

Latency-sensitive applications like real-time chatbots and conversational agents.

Tasks where the output is not persisted or used in subsequent genAI calls.
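The rules of thumb above can be captured in a small helper, sketched here. The `Task` type and its fields are hypothetical names invented for this example, and the model ID strings are assumed to match the identifiers listed in the model documentation; check them against the docs before relying on them. Flash is the default, in line with the advice to start with Flash and move to Pro only when needed.

```go
package main

import "fmt"

// Model identifiers (assumed; verify against the current model docs).
const (
	modelPro   = "gemini-2.5-pro"
	modelFlash = "gemini-2.5-flash"
)

// Task describes the traits that push a workload towards Pro.
// This type is hypothetical and exists only for this sketch.
type Task struct {
	ComplexReasoning bool // multi-step reasoning or intricate instructions
	QualityFirst     bool // output quality matters more than latency or cost
	FeedsDownstream  bool // output is passed into further genAI calls
}

// pickModel returns Pro if any Pro criterion applies, and Flash otherwise.
func pickModel(t Task) string {
	if t.ComplexReasoning || t.QualityFirst || t.FeedsDownstream {
		return modelPro
	}
	return modelFlash
}

func main() {
	chatbot := Task{}                      // latency-sensitive, output not reused
	planner := Task{FeedsDownstream: true} // plan is consumed by later calls

	fmt.Println("chatbot:", pickModel(chatbot))
	fmt.Println("planner:", pickModel(planner))
}
```

A static rule like this is only a starting point; evaluating both models on your own prompts remains the more reliable way to decide.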

A note on older models:

Older Gemini models remain available for existing projects but cannot be enabled in new ones. Note that deprecation can be granular, sometimes affecting specific features rather than an entire model version. We strongly recommend using the latest stable models for all new development. For details on model life cycle, refer to the attached documentation.

In the Code:

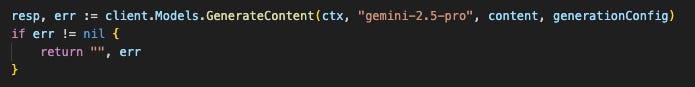

Switching between models in the GenAI Golang SDK is accomplished by changing the model name string in the generation API call:

Further Resources for Learning More:

Gemini Vertex AI docs: https://cloud.google.com/vertex-ai/generative-ai/docs/models

Model life cycle docs: https://cloud.google.com/vertex-ai/generative-ai/docs/learn/model-versions

Recommended deep dive into LLMs (training, etc.):

Gen AI Golang SDK: https://pkg.go.dev/google.golang.org/genai

📡 On the Radar: What’s Moving the Needle

A curated look at the articles, papers, resources and updates that are worth your time this week.

Claude 4.5 Sonnet Now on Vertex AI: Anthropic’s latest model, Claude 4.5 Sonnet, is now generally available on Google Cloud’s Vertex AI. This model raises the bar compared to previous versions of Sonnet and even Opus, outperforming previous models on a wide range of evaluations, all while maintaining the speed and cost-effectiveness of a mid-tier model.

From Prompt Engineering to Context Engineering: A new article from Anthropic’s engineering team, “Effective Context Engineering for AI Agents,” highlights a crucial shift in how we should think about building with LLMs. The key takeaway is that the focus is moving beyond simply crafting the perfect prompt to strategically managing the entire context provided to the model. This includes system instructions, tools, and message history. The article provides best practices for providing “minimal high-signal information” to get the best performance from your AI agents.

Building AI Agents from the Ground Up: For those who want to move beyond the high-level abstractions and understand what makes AI agents tick, “AI Agents from First Principles” is a must-read. This article breaks down the fundamental building blocks of AI agents: Prompts, Models, Tools, and Memory. It offers a practical guide to building agents from scratch, emphasizing the importance of a solid foundation and providing best practices for creating efficient and debuggable systems.

🤝 Want to Get Involved in the Community?

This roundtable is driven by its members. To join the conversation, share your work, or ask a question, you have two great options:

Join our private Google Chat space for real-time discussions and to participate in the weekly Open Thread. [Link to Chat Space]

Send a message to our community Google Group at roundtable-community@agentic-ai.build.

We look forward to hearing from you.

The Agentic AI Roundtable Core Team